This post has been moved to https://www.technologyintrend.com/2019/07/hadoop-vs-rdbms.html

Sorry for the inconvenience.

|

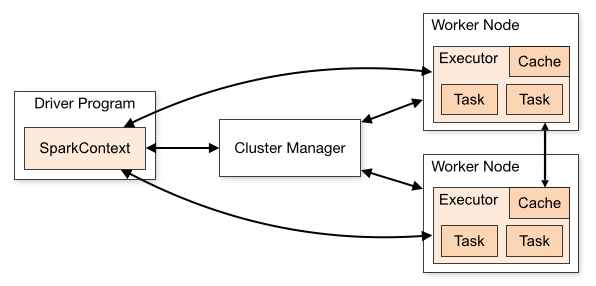

| src: https://spark.apache.org/docs/latest/cluster-overview.html |

Scala Spark

    spark-submit \
      --class <main-class> \
      --master <master-url> \
      --deploy-mode <deploy-mode> \
      --conf <key>=<value> \
      ... # other Spark properties options
      <application-jar> \
      [application-arguments]

PySpark

    spark-submit \
      --master <master-url> \
      --deploy-mode <deploy-mode> \
      --conf <key>=<value> \
      ... # other Spark properties options
      --py-files <python-modules-jars> \
      my_application.py \
      [application-arguments]
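The two templates differ only in how the application is named: `--class` plus a jar for Scala, `--py-files` plus a script for PySpark. As a minimal sketch, the helper below assembles either form as an argument list. `build_spark_submit`, its parameter names, `deps.zip`, and `input.csv` are hypothetical and not part of Spark; only flags that appear in the templates are emitted.

```python
import shlex


def build_spark_submit(app, master, deploy_mode="client", conf=None,
                       main_class=None, py_files=None, app_args=()):
    """Assemble a spark-submit argument list (hypothetical helper, not a Spark API)."""
    cmd = ["spark-submit"]
    if main_class is not None:
        # Scala/Java applications name their entry point with --class.
        cmd += ["--class", main_class]
    cmd += ["--master", master, "--deploy-mode", deploy_mode]
    for key, value in (conf or {}).items():
        cmd += ["--conf", f"{key}={value}"]
    if py_files:
        # --py-files takes a single comma-separated list.
        cmd += ["--py-files", ",".join(py_files)]
    cmd.append(app)
    cmd += list(app_args)
    return cmd


# Scala-style submission: entry-point class plus application jar.
scala_cmd = build_spark_submit("/path/to/examples.jar", "yarn",
                               deploy_mode="cluster", main_class="main_class")

# PySpark-style submission: no --class, extra modules via --py-files.
pyspark_cmd = build_spark_submit("my_application.py", "local[8]",
                                 conf={"spark.executor.memory": "2g"},
                                 py_files=["deps.zip"], app_args=["input.csv"])

# shlex.join quotes arguments so the line can be pasted into a shell.
print(shlex.join(scala_cmd))
print(shlex.join(pyspark_cmd))
```

Building a list rather than a single string keeps each argument separate, so the result can also be passed directly to `subprocess.run` without shell quoting concerns.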

| Mode | Value of `--master` |
| --- | --- |
| Standalone | `--master spark://HOST:PORT` |
| Mesos | `--master mesos://HOST:PORT` |
| YARN | `--master yarn` |
| Local | `--master local[N]`, where N is the number of worker threads; `local[*]` uses one thread per logical core |
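The mapping from deployment mode to `--master` value can be sketched as a small function. This is an illustration only: `master_url` and its parameters are hypothetical names, and the hosts and ports in the usage lines are made-up values.

```python
def master_url(mode, host=None, port=None, threads=None):
    """Return the --master value for a deployment mode (hypothetical helper)."""
    mode = mode.lower()
    if mode in ("standalone", "mesos"):
        if host is None or port is None:
            raise ValueError(f"{mode} mode needs both host and port")
        scheme = "spark" if mode == "standalone" else "mesos"
        return f"{scheme}://{host}:{port}"
    if mode == "yarn":
        # The cluster location comes from the Hadoop configuration, not the URL.
        return "yarn"
    if mode == "local":
        # With no thread count, local[*] asks for one thread per logical core.
        return f"local[{threads if threads is not None else '*'}]"
    raise ValueError(f"unknown mode: {mode}")


print(master_url("standalone", "10.0.0.5", 7077))
print(master_url("yarn"))
print(master_url("local", threads=8))
```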

| Client | Cluster |
| --- | --- |
| The driver runs on the machine from which the job is submitted. | The driver runs inside the cluster. The resource manager or master decides which node the driver runs on. |
| The job fails if the driver is disconnected. | After submitting the job, the client can disconnect. |
| Can be used to work with Spark interactively. Running an action on an RDD or DataFrame (such as count) and capturing the result in logs is easy. | Cannot be used to work with Spark interactively. |
| Jars can be accessed from the client machine. | Because the driver runs on a different machine than the client, jars present only on the local machine won't work. Make them available to all nodes, either by placing them on each node or by passing them with `--jars` or `--py-files` during spark-submit. |

YARN:

| Client mode | Cluster mode |
| --- | --- |
| The Spark driver does not run on the YARN cluster; only the executors run inside it. | The Spark driver and the executors both run on the YARN cluster. |
| The driver uses `spark.local.dir` as its local directory; the executors use the YARN config `yarn.nodemanager.local-dirs`. | Both the executors and the driver use the local directories configured for YARN (Hadoop YARN config `yarn.nodemanager.local-dirs`). |

Local

Scala:

    ./bin/spark-submit \
      --class main_class \
      --master local[8] \
      /path/to/examples.jar

PySpark:

    ./bin/spark-submit \
      --master local[8] \
      my_job.py

Spark Standalone

Scala:

    ./bin/spark-submit \
      --class main_class \
      --master spark://<ip-address>:7077 \
      --deploy-mode cluster \
      --supervise \
      --executor-memory 10G \
      --total-executor-cores 100 \
      /path/to/examples.jar

PySpark (standalone mode does not support cluster deploy mode for Python applications, so this example uses client mode and omits --supervise):

    ./bin/spark-submit \
      --master spark://<ip-address>:7077 \
      --deploy-mode client \
      --executor-memory 10G \
      --total-executor-cores 100 \
      --py-files <python-modules-jars> \
      my_job.py

YARN cluster mode (use --deploy-mode client for client mode)

Scala:

    ./bin/spark-submit \
      --class main_class \
      --master yarn \
      --deploy-mode cluster \
      --executor-memory 10G \
      --num-executors 50 \
      /path/to/examples.jar

PySpark:

    ./bin/spark-submit \
      --master yarn \
      --deploy-mode cluster \
      --executor-memory 10G \
      --num-executors 50 \
      --py-files <python-modules-jars> \
      my_job.py
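Not every combination of master and deploy mode is accepted. As a hedged sketch, the check below encodes two restrictions that, to my knowledge, spark-submit enforces: a local master cannot be combined with cluster deploy mode, and standalone clusters do not support cluster deploy mode for Python applications. `check_submit` is a hypothetical helper, the `.py` suffix test is a simplifying assumption, and the rule list is not exhaustive.

```python
def check_submit(master, deploy_mode, app):
    """Return a list of problems with a spark-submit combination (hypothetical helper)."""
    problems = []
    # Assumption: a .py suffix is enough to recognise a Python application.
    is_python = app.endswith(".py")
    if deploy_mode not in ("client", "cluster"):
        problems.append(f"unknown deploy mode: {deploy_mode}")
    if deploy_mode == "cluster":
        if master.startswith("local"):
            problems.append("cluster deploy mode cannot be used with a local master")
        if master.startswith("spark://") and is_python:
            problems.append("standalone mode does not support cluster deploy "
                            "mode for Python applications")
    return problems


# A Python job on a standalone master is fine in client mode only.
print(check_submit("spark://10.0.0.5:7077", "client", "my_job.py"))
print(check_submit("spark://10.0.0.5:7077", "cluster", "my_job.py"))
print(check_submit("yarn", "cluster", "my_job.py"))
```

An empty list means none of the encoded rules were violated; it does not guarantee that the submission will succeed.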

| Language | Code |
| --- | --- |
| Scala | `val myRdd1 = sc.textFile("file_path")` |
| Scala | `val myRdd2 = spark.read.csv("file_path").rdd` |
| Python | `myRdd1 = sc.textFile("file_path")` |
| Python | `myRdd2 = spark.read.csv("file_path").rdd` |

| Language | Code |
| --- | --- |
| Scala | `val myRdd1 = sc.parallelize(Array("Bangalore", "New York", "London"))` |
| Python | `myRdd1 = sc.parallelize(["Bangalore", "New York", "London"])` |